How we saved ~$2000 per month in inter-AZ data transfer costs in AWS

How we saved ~$2000 per month in inter-AZ data transfer costs in AWS while keeping HA Prometheus monitoring

In my organisation we have 300 microservices running on 80-odd nodes in our Kubernetes cluster, and 90% of them use Istio for communication. When we enabled Istio for our microservices, we also enabled Prometheus metrics scraping for Istio, to understand traffic patterns in our service mesh and to set up alerting based on these metrics.

After these configuration changes, our EC2 data transfer costs shot through the roof. Inter-AZ data transfer alone was costing more than $2k per month, and it took us a while to understand the root cause. Prometheus was scraping metrics every 10 seconds from every istio-proxy sidecar container running alongside our application pods, and we had 1200+ pods running on 80 nodes. Prometheus was running in a single AZ while the pods were scattered across nodes in 2 different AZs, so a large share of the scrape traffic crossed AZ boundaries. In AWS, data transfer within the same AZ is free, but inter-AZ data transfer is charged per GB transferred.
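To get a feel for how scrape traffic turns into a bill, here is a rough back-of-the-envelope sketch in Python. The pod count and the 10-second interval come from the setup above. The payload size per scrape, the share of pods outside Prometheus's AZ, and the effective price of $0.02 per GB (commonly quoted as $0.01 per GB in each direction) are assumptions for illustration, not measured values, so check your own metrics and your region's pricing.

```python
def monthly_cross_az_cost_usd(
    pods: int,
    cross_az_fraction: float,
    scrape_interval_s: float,
    bytes_per_scrape: float,
    usd_per_gb: float = 0.02,  # assumption: $0.01/GB billed in each direction
    days: int = 30,
) -> float:
    """Estimate the monthly inter-AZ charge caused by metric scrapes."""
    # Number of scrapes each pod receives in a month.
    scrapes_per_pod = days * 24 * 3600 / scrape_interval_s
    # Only scrapes of pods outside Prometheus's own AZ cross an AZ boundary.
    cross_az_bytes = pods * cross_az_fraction * scrapes_per_pod * bytes_per_scrape
    # Assumption: 1 GB = 1e9 bytes for billing purposes.
    return cross_az_bytes / 1e9 * usd_per_gb


if __name__ == "__main__":
    # Hypothetical inputs: half the pods in the other AZ, 500 kB per scrape.
    estimate = monthly_cross_az_cost_usd(
        pods=1200,
        cross_az_fraction=0.5,
        scrape_interval_s=10,
        bytes_per_scrape=500_000,
    )
    print(f"estimated inter-AZ cost: ${estimate:,.0f} per month")
```

The estimate scales linearly with every input, which is why a short scrape interval combined with large istio-proxy metric payloads adds up quickly.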

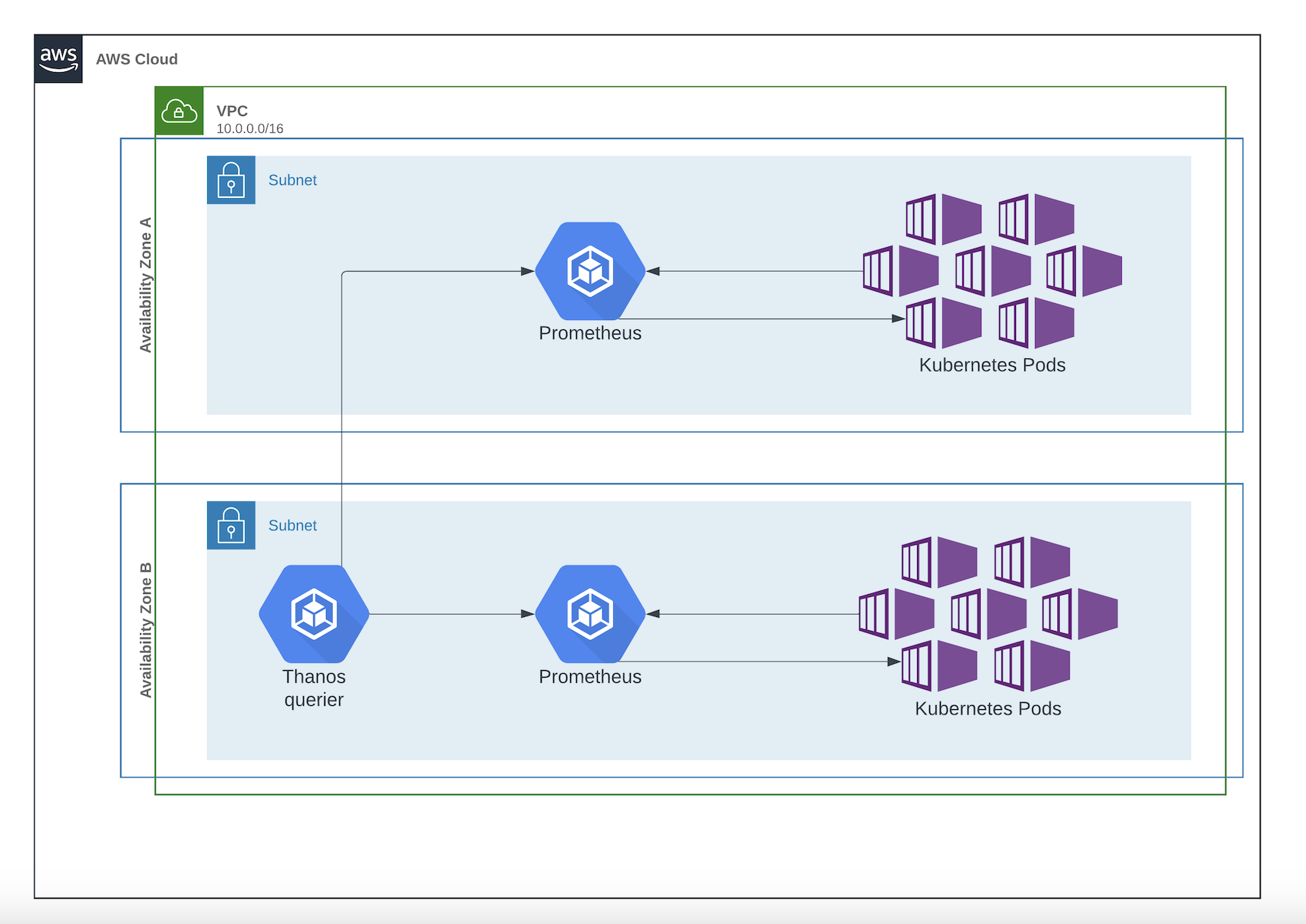

Architecture (Before):

We were not in a position to stop Prometheus scraping, as the telemetry and metrics data was important for monitoring and alerting on our services. So to solve the problem we decided to make a few changes to the architecture of the Istio monitoring stack.

Solution:

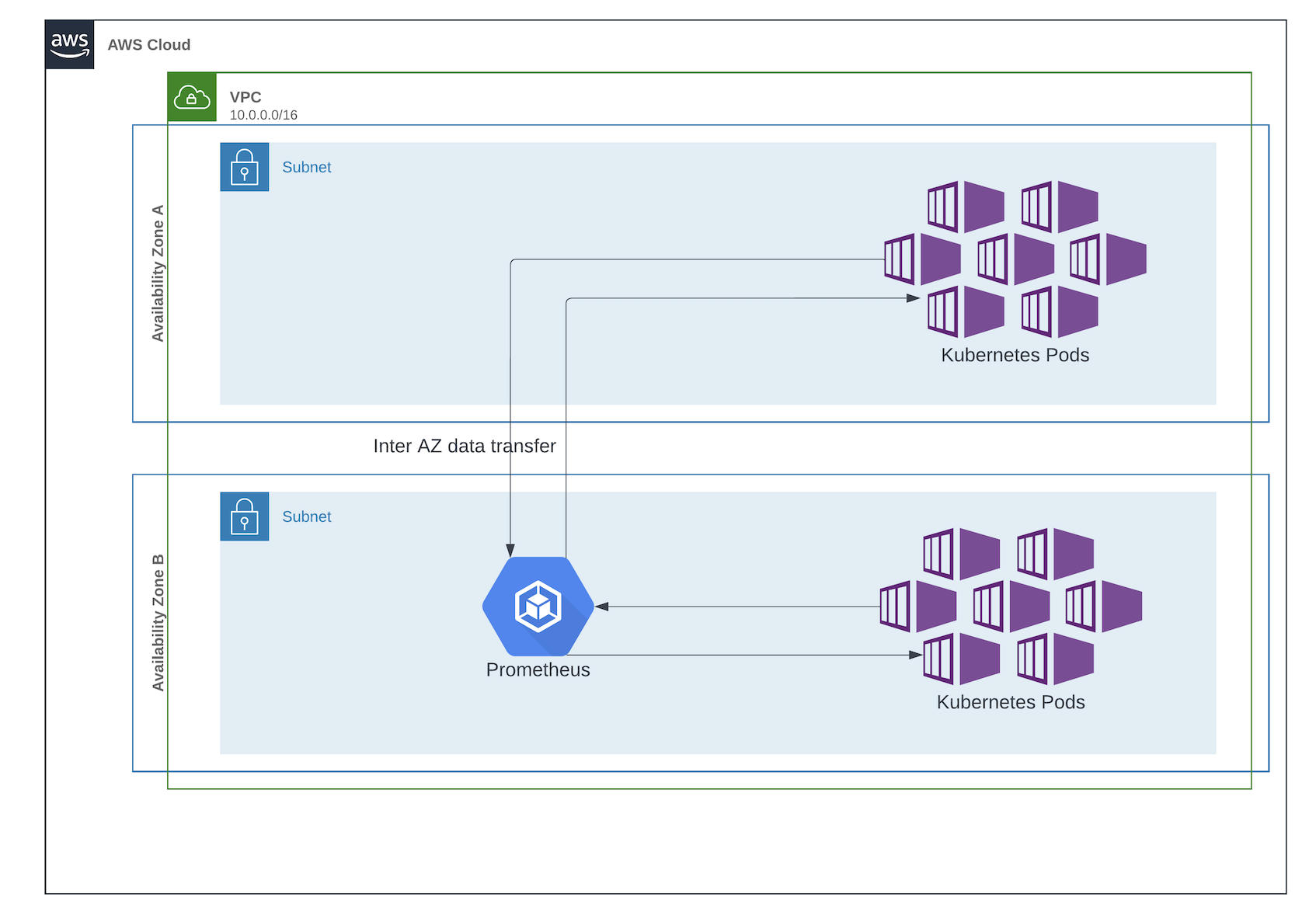

- We set up 2 Prometheus clusters, one Prometheus in each AZ. The idea is that each Prometheus scrapes metrics only from the istio-proxy containers running in its own availability zone.

- Pods didn't have an availability zone label, so we also created a Kubernetes operator that intercepts the pod creation call and adds an availability zone label to the pod at creation time.

- Now every pod has an availability zone label, and in each Prometheus scrape config we have specified a condition to scrape only pods whose availability zone label matches that Prometheus's own AZ, so scraping metrics incurs no inter-AZ data transfer charge. To query metrics we use Thanos, which executes each query against both Prometheus instances and returns unified results.
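The zone-scoped scrape condition can be sketched as a Prometheus `relabel_configs` rule with `action: keep`. The Python snippet below builds such a scrape job as a plain dictionary and prints it as JSON for illustration (the real Prometheus config is YAML). The pod label key `availability-zone`, the job name `istio-proxy`, and the zone names are hypothetical, since the actual names we used are not shown here; treat this as a minimal sketch rather than our exact configuration.

```python
import json
import re


def zone_scoped_scrape_job(zone: str, pod_label: str = "availability-zone") -> dict:
    """Build a scrape job that keeps only pods labelled with the given zone."""
    # Prometheus exposes pod labels as __meta_kubernetes_pod_label_<name>,
    # with characters outside [a-zA-Z0-9_] replaced by underscores.
    meta_label = "__meta_kubernetes_pod_label_" + re.sub(r"[^a-zA-Z0-9_]", "_", pod_label)
    return {
        "job_name": "istio-proxy",  # hypothetical job name
        "scrape_interval": "10s",
        "kubernetes_sd_configs": [{"role": "pod"}],
        "relabel_configs": [
            # Drop every discovered pod whose zone label is not this zone.
            # Relabel regexes are fully anchored, and AZ names such as
            # "us-east-1a" contain no regex metacharacters.
            {"source_labels": [meta_label], "regex": zone, "action": "keep"},
        ],
    }


if __name__ == "__main__":
    # One job per Prometheus, each pinned to the AZ that Prometheus runs in.
    for zone in ("us-east-1a", "us-east-1b"):  # hypothetical zone names
        print(json.dumps(zone_scoped_scrape_job(zone), indent=2))
```

Each Prometheus gets the job for its own zone only, so its scrape targets never sit in the other AZ. A real istio-proxy job would also need rules to select the sidecar's metrics port and path, which are omitted here.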

Architecture (After):